I realize that I was very unclear in my initial post (sorry!) What exact operation in particular do you think stores all elements of a sum in memory before adding them together? It seems you are implying that the MATLAB BLAS library does this for the A * x operation and you have some evidence of it.

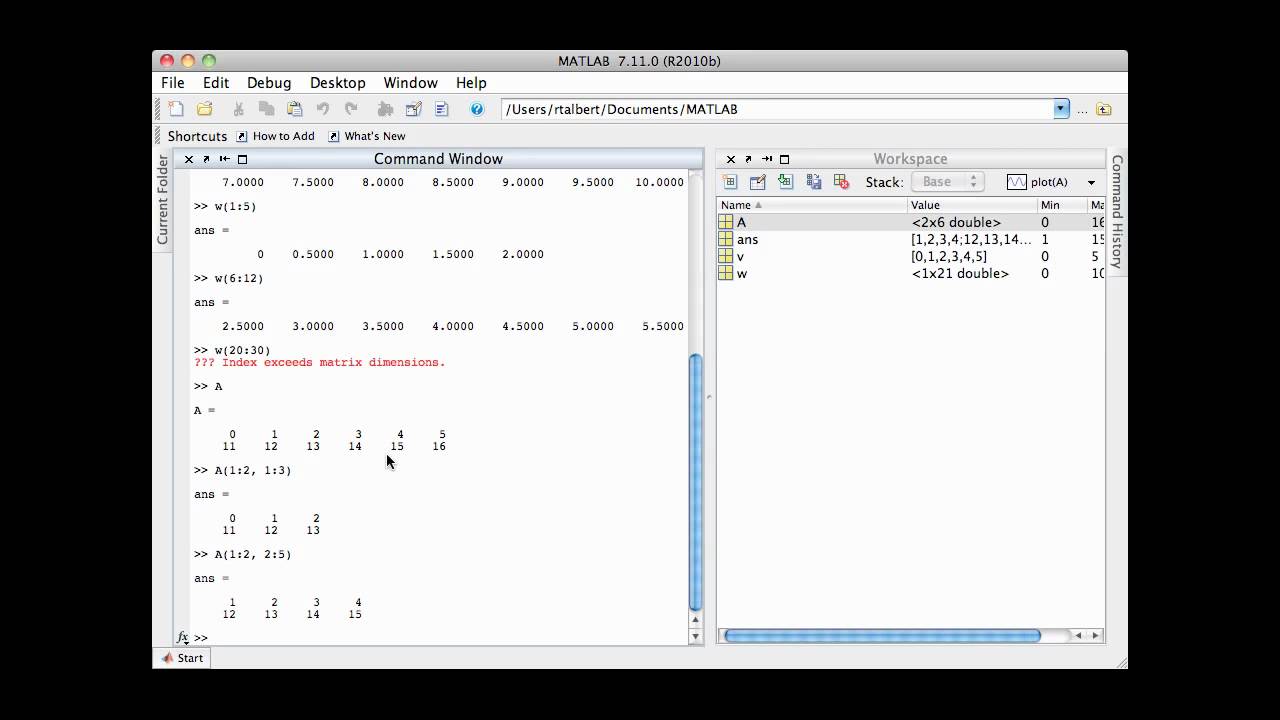

My experience in writing my mtimesx submission have shown only some limited cases involving complex variables with I doubt there is any significant large memory wasting code in the library. of the machine to optimize memory access etc. Presumably the writers of this library have taken into account the cache size etc. For full matrices, MATLAB calls a 3rd party highly optimized BLAS library for this (and similar) operations and it is unlikely you will be able to meet or beat this library for speed. Are you asking specifically about the operation A* x where A is a 2D matrix and x is a 1d vector? And are you asking how does MATLAB do this and could you do it more efficiently (or as efficiently) by hand coding your own and compiling it? If so, the answer generally is no. I have re-read your post several times now and am still not sure what your exact question is. In any case, use PROFILER is a recommended strategy to detect what take time in your code (note that the profiler only provides an approximation of what's going on, but it is largely enough to start with). If you need to read a file to build you matrix element, then building matrix element will surely cost more time than any matrix x vector multiplication, regardless the later is carried out with or without for-loop - by adding or using built-in function(s). The for-loop in C fortran is fast because it usually manipulates the basic type of the computer: a bared large chunk data array of numbers (Matlab array has an overhead before this bared array can be directly accessed by lower level code). Statements such as calling another function, creating a matrix, creating a scalar, deleting them all cost time, let alone reading from file. I'm hoping there's a way to code a for loop that's just as fast as matrix-vector multiplication but doesn't store unnecessary elementsįor loop by itself is not slow, what is slow is overhead due to code inside the iteration body. 
In each iteration of the for loop I might have to be calling an external function, or reading something from a file in order to figure out what is going to be added to the total sum, would this slow down my code considerably ? If that's true, then if I code a for loop summation and compile it to machine code (using FORTRAN or C) would it be just as fast as matrix-vector multiplication ? or is there something else that makes the matrix-vector multiplication more efficient ? In matlab, is the matrix-vector multiplication not itself just a for loop that is implemented efficiently since it was compiled to machine code ? In other words, the matrix-vector multiplication way stores ALL elements of the sum, even after some of them might not be needed anymore - while doing the summation in a for loop allows one to generate the elements on the fly, and delete them after they've been added to the total sum. Thereby saving on the storage costs of storing ALL elements of the sum in a big matrix.

I could delete elements of the summation as they are added to the total sum. If I were to do the summation in a for loop instead of using matrix-vector multiplication,

The problem I have is that when the matrices become extremely large, even the sparse representation takes up >100GB of memory, so its multiplication by a vector still ends up being slow. Where the for loop is actually more efficient than all vectorized alternatives,Īs a rule of thumb I think most people would agree that MATLAB's matrix multiplication is much faster than doing the summation in a for loop. I've had a problem that's been bothering me for a couple of weeks now and wanted to see other people's opinions,
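The question's "generate the elements on the fly" idea can be made concrete with a short C sketch. This is an illustration under stated assumptions, not how MATLAB or its BLAS library actually implement `A*x`.

- `matvec_stored` multiplies a dense matrix that must already sit in memory.
- `matvec_free` computes each term, adds it to a running sum, and discards it, so only `x`, `y` and one accumulator are kept.
- The generator `entry()` and its formula are hypothetical stand-ins for whatever actually produces A(i,j).

```c
#include <stddef.h>

/* Hypothetical generator for A(i,j): a stand-in for whatever computes each
   entry on the fly (a formula, an external function, ...). Here it returns
   Hilbert matrix entries, chosen only for illustration. */
static double entry(size_t i, size_t j)
{
    return 1.0 / (double)(i + j + 1);
}

/* y = A*x with A stored as a dense, row-major m-by-n array:
   all m*n entries must exist in memory before the loop runs. */
static void matvec_stored(size_t m, size_t n, const double *A,
                          const double *x, double *y)
{
    for (size_t i = 0; i < m; ++i) {
        double sum = 0.0;
        for (size_t j = 0; j < n; ++j)
            sum += A[i * n + j] * x[j];
        y[i] = sum;
    }
}

/* y = A*x without storing A: each term entry(i,j)*x[j] is computed,
   added to the running sum, and then discarded. */
static void matvec_free(size_t m, size_t n, const double *x, double *y)
{
    for (size_t i = 0; i < m; ++i) {
        double sum = 0.0;
        for (size_t j = 0; j < n; ++j)
            sum += entry(i, j) * x[j];
        y[i] = sum;
    }
}
```

Both functions do the same arithmetic in the same order. The matrix-free version trades memory for one call to `entry()` per term. As noted above about loop overhead, if `entry()` has to read from a file, that cost will dominate everything else. A loop like this also cannot hand the work to an optimized BLAS kernel, so it only pays off when generating an entry is cheap compared to storing it.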

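For the sparse case, the multiplication is also just a loop, but it runs over the stored nonzeros only. The sketch below assumes compressed sparse column (CSC) storage, which is the layout MATLAB uses for sparse matrices. The array names `jc`, `ir` and `pr` mirror the MEX API accessors (`mxGetJc`, `mxGetIr`, `mxGetPr`), but this is plain C, not a MEX file. `csc_matvec` and `csc_bytes` are hypothetical helpers written for illustration.

```c
#include <stddef.h>

/* y = A*x for an m-by-n sparse A in compressed sparse column form:
   jc[j]..jc[j+1]-1 index the nonzeros of column j,
   ir[k] is the row of the k-th nonzero, pr[k] is its value. */
static void csc_matvec(size_t m, size_t n, const size_t *jc, const size_t *ir,
                       const double *pr, const double *x, double *y)
{
    for (size_t i = 0; i < m; ++i)
        y[i] = 0.0;
    for (size_t j = 0; j < n; ++j) {
        double xj = x[j];
        for (size_t k = jc[j]; k < jc[j + 1]; ++k)
            y[ir[k]] += pr[k] * xj;   /* one stored nonzero, one multiply-add */
    }
}

/* Bytes needed for the CSC arrays: one value and one row index per
   nonzero, plus n+1 column pointers. With 8-byte doubles and a 64-bit
   size_t this is about 16 bytes per nonzero. */
static size_t csc_bytes(size_t n, size_t nnz)
{
    return nnz * (sizeof(double) + sizeof(size_t)) + (n + 1) * sizeof(size_t);
}
```

At roughly 16 bytes per nonzero, about 6.25e9 nonzeros already reach 100 GB. The loop touches every stored entry once, so for matrices that large the run time is typically dominated by streaming that data through memory rather than by the multiply-adds themselves.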